
Generative AI in SciComm: Bridge, Wall, or Both?

A guide to getting “the good” from generative AI while understanding and dodging “the bad.”

by Apeksha Srivastava

Picture this: A Google search result explains black holes as “huge concentrations of matter packed into very tiny spaces” (NASA, n.d.). According to another description, “all the mass in a black hole is concentrated in a tiny region, surrounded by a boundary called the event horizon …” (Center for Astrophysics, n.d.). Wouldn’t this information be intimidating to a curious 12-year-old? Now, try asking ChatGPT to ‘explain a blackhole in simple words.’ I received the following response: “Imagine [a black hole] like a super-powerful vacuum cleaner that pulls everything around it in and doesn’t let anything out … black hole forms when a star collapses in on itself … the area around it becomes like a point of no return … once something crosses this point, it can’t escape, and we can’t see or detect it anymore, hence, the term ‘black’ hole.” My 12-year-old cousin found the vacuum cleaner analogy relatable and approachable. He not only appeared to grasp the general sense of the term but also found it fascinating! As a doctoral student in science communication, I witnessed firsthand how generative artificial intelligence (AI) can serve as a link between science and curiosity.

Generative AI can both bridge and block effective science communication. Recognizing its benefits, pitfalls, and safeguards is crucial to using it responsibly. This article outlines the positives and negatives of generative AI, its mode(s) of communication, and what its responsible use in science communication looks like.

ChatGPT, an AI chatbot, was released by OpenAI in November 2022. Another tool, Perplexity AI, an AI-powered answer engine, was launched in December 2022. Google released its AI chatbot Bard, later renamed Gemini, in March 2023. These AI tools learn patterns from the data they are trained on, which enables them to generate responses to instructions. Several studies have highlighted the benefits of AI chatbots in science-related communication.

Gao and colleagues (2023) provided ChatGPT with the titles of some published research papers and asked it to generate research abstracts (scientific summaries). They found that the tool wrote convincing summaries. The authors propose using AI carefully, combined with a researcher’s knowledge, for writing and formatting science pieces. When used cautiously, ChatGPT can assist with research and communication by creating outlines and improving writing speed and style (Lubiana et al., 2023). It may also aid researchers in overcoming language barriers (Lenharo, 2024). Generative AI may also enhance interdisciplinary collaboration and help brainstorm ideas (Pu et al., 2024). Prompting AI tools to assume roles (e.g., microbiologist or computer scientist) can help frame specific inquiries that reduce response ambiguity and increase accuracy. With proper instructions, AI chatbots can provide tailored explanations and enhance public access to science. This would be an ideal connection between science and society, right?

AI Can Mislead Confidently

But what about the other side of the coin? I asked ChatGPT to provide summaries of ‘The Painter of Signs’ and ‘The Dark Room’, written by the Indian author R. K. Narayan. The tool provided incorrect names for the main female characters: Dinakara instead of Daisy and T. Lakshmi instead of Savitri. In response to my prompt, “You provided wrong names of female protagonists,” it said, “Here’s the correct information …” but still provided wrong names, this time Rama and Sarla. This incident shows that ChatGPT can present errors confidently. Such misleading information may have a significant impact, for example, on the explorations of a literature student.

ChatGPT can generate different (and incorrect) responses to multiple prompts within the same chat thread. It appears that AI tools do not truly understand the essence of a domain, but rather attempt to mimic it based on their training data. This mimicry may generate confident-sounding information that is either a combination of true and false statements or, in the worst case, completely fabricated. This phenomenon is termed “hallucination,” by analogy with false perceptions, and it is a major concern for researchers. For example, summaries with “believable numbers” generated by AI tools could be used to falsify research (Gao et al., 2023).

Emsley (2023) asked ChatGPT to check one incorrect reference for his study. Although he received an apology, the tool’s subsequent “correct” reference was also incorrect. This behavior is similar to my conversation with the tool. Based on this experience, Emsley disagreed with the term “hallucinations” and insisted on the terms “fabrications” (made-up data; e.g., Franklin, 2024, who tested Gemini) and “falsifications” (data manipulation). Alkaissi and McFarlane (2023) also emphasized that ChatGPT can generate a combination of factual and fabricated data, questioning the tool’s accuracy and integrity in academic writing. Siontis and colleagues (2024) stated that AI chatbots can provide seemingly credible but highly misleading responses (e.g., non-existent research papers).

Generative AI has been labeled a black box (Lenharo, 2024). This label reflects our inability to understand how these tools arrive at their outputs, including hallucinations and fabrications. Addressing such falsifications may lead to more productive research planning and effective science communication.

Practical Checklist

Use generative AI to generate ideas, create analogies, and develop outlines.

Do not rely on it for unverified facts, citations, or domain-specific accuracy.

Always link to original sources, run fact checks, and keep a human in the loop.

Generative AI Often Shows One-Way ‘Deficit’ Communication Behavior

The deficit and dialogue models represent two contrasting approaches to communication. The former treats audiences as passive receivers and assumes that transmitting factual information will ‘fill the gap’ in the public understanding of science. For example, vaccine awareness campaigns in newspapers often employ the deficit model, assuming that transmitting facts and statistics will lead to better vaccine uptake. The dialogue model emphasizes two-way communication, recognizing that the audience has valuable perspectives and questions to contribute. For example, the “Be COVID Wise” series, launched by the Indian Scientists’ Response to COVID-19 in 2020, featured scientists engaging in conversations with the public. This model fosters trust by acknowledging the public as active participants in science-related discussions.

Generative AI may often follow the deficit model of communicating science. Although model behavior varies with prompts and versions, ChatGPT generally assumes the role of an expert when asked to provide information. In response to my prompt, “I have seen you follow the deficit model of science communication,” it said, “You’re right to notice that … I’m designed to provide clear, accurate information when someone asks a question, which naturally leans toward transmitting facts. If I don’t have enough context about what you already know, value, or believe, I might default to simply informing, rather than engaging.” I received a similar response from Perplexity AI: “… A system like this often provides direct, one-way explanations, which do resemble traditional deficit-style communication where information flows from the expert (in this case, an AI) to the user. Many digital tools, including AI, have been designed with the assumption that users are primarily seeking factual information and knowledge, which itself can reflect elements of the deficit model.”

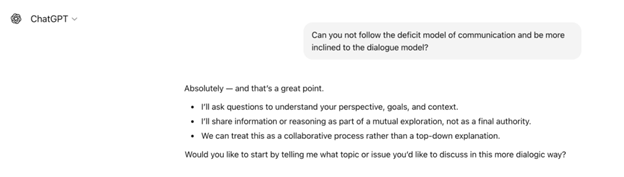

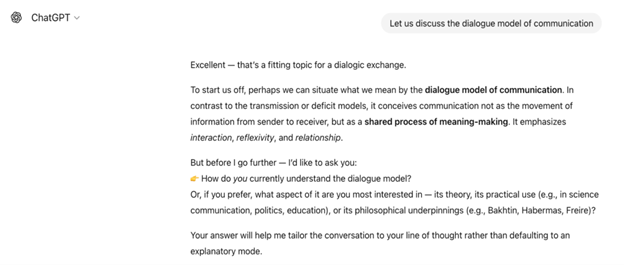

Furthermore, based on my and Emsley’s (2023) experiences (mentioned previously), in which ChatGPT repeatedly provided incorrect information while ignoring users’ questions or suggestions, I argue that such tools do not favor a dialogue model unless clearly instructed. In line with this idea, Volk and colleagues (2025) reported that although ChatGPT acknowledged that science communication is a diverse field that extends beyond conveying facts to the public, it still listed one-way information transmission formats, such as storytelling or education. Moreover, the researchers noted that ChatGPT’s depiction of science is inclined toward the hard sciences, such as biology, chemistry, and physics. One possible reason could be biases in its training data. AI tools are also designed to respond in real time, which limits the possibility of engaging in a genuine dialogue. These aspects require careful consideration, informed by insights from fields such as the humanities and social sciences. The accompanying screenshots show that the AI tool then started focusing on my preferences and interests rather than just disseminating information.

Image provided by the author.

Image provided by the author.

In reality, science communication never occurs in a vacuum. It is far more complex, shaped by multilayered factors such as culture, values, and experiences. This complexity is not limited to information recipients; it also applies to information providers. According to Kearns (2021), “… who creates and disseminates knowledge, including the languages they use, affects not only what is considered valid but also who influences the questions that are asked and the benefits resulting from knowledge” (p. 173). Unless very carefully guided, generative AI cannot adjust its responses to align with cultural or emotional contexts and engage in true two-way meaning-making. Furthermore, although ChatGPT can attempt to simulate empathy, it has yet to truly achieve the ability to understand and share others’ feelings (e.g., Cerf, 2025). Empathy is critical in science communication as it helps build trust. Such elements (or their absence) are bound to influence the information that generative AI imparts to the user.

Centering the Humans, Not Replacing Them!

Who is at the center, providing meaning and authority, when generative AI is involved in science communication? It appears that these tools have begun to displace the human expert. However, although the power dynamics are shifting, generative AI still lacks the ‘real’ lived experience, empathy, and accountability that are critical components of effective science communication (e.g., Mazurek, 2025). Nonetheless, these tools are learning and improving rapidly, which makes one think: What will happen when AI starts acquiring these abilities as well?

Since AI interprets and responds to questions based on its training data, it may reflect and reinforce societal inequalities and exclusions (e.g., Kessler et al., 2025). In simple terms, AI responses may include, for example, wellness options focused on the metabolism of a specific population, excluding others due to a lack of sufficient training data. Due to such biases, it cannot present perfectly balanced information. Excessive reliance on AI can also lead to the ‘normalization’ of oversimplifying information, omitting nuance, and overlooking contradictions. Nonetheless, AI also has the potential to look beyond Western perspectives in science communication if prompted appropriately and verified cautiously. Becoming aware of how one’s personal and collective experiences are embedded in these systems opens spaces for more inclusive and accountable development.

Hence, it is worth exploring: Who really is at the center and the margins in this field? Who is really speaking and has the authority: human or machine? Or is it the reader, whose interpretation ultimately gives meaning to the explanation? The growing use of AI tools in science communication has begun to blur the boundaries of authority. The complexity of science communication is increasing with this network of human and algorithmic voices, each influencing how information is formulated, transferred, received, and interpreted.

So, Bridge, Wall, or Both?

The million-dollar question: Is generative AI the bridge that facilitates or the wall that hinders science communication? Well, it can be both: a bridge that provides access to the wonders of science, and a wall that spreads false information. A recent preliminary study by researchers from the Massachusetts Institute of Technology also reported decreased brain activity in individuals who used generative AI (Kos’myna, n.d.). The researchers encouraged further exploration of how AI can affect learning skills. Ultimately, it becomes our responsibility to remain critical. We should not trust AI blindly. Let us question ourselves: Is generative AI, with its approachable ways of communicating science topics, really making our lives easier? Are we certain there are no hidden traps? Our goal should be to get “the good” from generative AI while understanding and dodging “the bad.”

Key Takeaways

Define clear objectives in AI prompts regarding what the user wants to achieve.

Understand that generative AI is not infallible.

Double-check AI-generated content for accuracy, relevance, and context.
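For readers who script their prompts, the first takeaway and the role-based prompting described earlier can be sketched in a few lines of Python. This is only an illustrative sketch, not a method used in this article: the `PromptSpec` class, the `build_prompt` function, and the wording of the instructions are all hypothetical, and no chatbot is actually called.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of a science-communication prompt."""
    role: str       # persona the chatbot is asked to assume
    audience: str   # who the explanation is for
    objective: str  # what the user wants to achieve
    topic: str      # the science topic to explain


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a plain-text prompt. No AI service is contacted here."""
    lines = [
        f"Role: {spec.role}",
        f"Audience: {spec.audience}",
        f"Objective: {spec.objective}",
        f"Topic: {spec.topic}",
        # Nudge the tool toward dialogue rather than one-way transmission.
        "Before explaining, ask me one question about what I already know.",
        # Make later fact-checking easier; this does not replace human review.
        "Label any claim you cannot attribute to a source as UNVERIFIED.",
    ]
    return "\n".join(lines)


spec = PromptSpec(
    role="astrophysicist",
    audience="a curious 12-year-old",
    objective="build an intuitive picture using one everyday analogy",
    topic="black holes",
)
prompt = build_prompt(spec)
print(prompt)
```

Stating the role, audience, and objective on separate lines keeps each choice visible and easy to revise. The last two instructions are assumptions about what might encourage dialogue and flag uncertain claims; the output still needs to be double-checked by a human.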

References

Alkaissi, H., & McFarlane, S. I. (2023). Artificial hallucinations in ChatGPT: Implications in scientific writing. Cureus, 15(2), Article e35179.

Asian News International. (2023). R.K. Narayan death anniversary special: Revisit some of his remarkable works. ThePrint. Online.

Center for Astrophysics. (n.d.). Black holes. Harvard & Smithsonian.

Cerf, E. (2025). AI chatbots perpetuate biases when performing empathy, study finds. UC Santa Cruz News. Online.

Emsley, R. (2023). ChatGPT: These are not hallucinations—they’re fabrications and falsifications. Schizophrenia, 9(1), 52–53.

Franklin, G. M. (2024). Google’s new AI Chatbot produces fake health-related evidence-then self-corrects. PLOS Digital Health, 3(9), Article e0000619.

Gao, C. A., Howard, F. M., Markov, N. S., Dyer, E. C., Ramesh, S., Luo, Y., & Pearson, A. T. (2023). Comparing scientific abstracts generated by ChatGPT to real abstracts with detectors and blinded human reviewers. NPJ Digital Medicine, 6(1), Article 75.

Indian Scientists’ Response to COVID-19. (2020). Conversations in the times of COVID-19. Press Release.

Kearns, F. (2021). Getting to the heart of science communication: A guide to effective engagement. Island Press.

Kessler, S. H., Mahl, D., Schäfer, M. S., & Volk, S. C. (2025). All eyes on AI: A roadmap for science communication research in the age of artificial intelligence. Journal of Science Communication, 24(2), Y01.

Kos’myna, N. (n.d.). Your brain on ChatGPT: Overview. MIT Media Lab.

Lenharo, M. (2024). ChatGPT turns two: How the AI chatbot has changed scientists’ lives. Nature, 636, 281–282.

Lubiana, T., Lopes, R., Medeiros, P., Silva, J. C., Gonçalves, A. N. A., Maracaja-Coutinho, V., & Nakaya, H. I. (2023). Ten quick tips for harnessing the power of ChatGPT in computational biology. PLoS Computational Biology, 19(8), Article e1011319.

Mazurek, M. (2025). Limitations of artificial intelligence: Why artificial intelligence cannot replace the human mind. Filozofia i Nauka, 97–111.

NASA. (n.d.). Black holes. NASA Science: Universe.

OpenAI. (2025). ChatGPT (GPT-4o) was used in this article’s examples [Large language model].

Pu, Z., Shi, C. L., Jeon, C. O., Fu, J., Liu, S. J., Lan, C., … & Jia, B. (2024). ChatGPT and generative AI are revolutionizing the scientific community: A Janus‐faced conundrum. iMeta, 3(2), Article e178.

Siontis, K. C., Attia, Z. I., Asirvatham, S. J., & Friedman, P. A. (2024). ChatGPT hallucinating: Can it get any more humanlike? European Heart Journal, 45, 321–323.

Thwaite, A. (1976). The painter of signs (by R. K. Narayan). The New York Times, 189.

Volk, S. C., Schäfer, M. S., Lombardi, D., Mahl, D., & Yan, X. (2025). How generative artificial intelligence portrays science: Interviewing ChatGPT from the perspective of different audience segments. Public Understanding of Science, 34(2), 132–153.

About the Author

A writer by passion and a researcher by education, Apeksha Srivastava is currently a doctoral candidate at the Indian Institute of Technology Gandhinagar, Gujarat, India. She was a visiting researcher at the University of Colorado, Colorado Springs, USA, from April to July 2024. Her research area lies at the intersection of science communication and psychology, and she enjoys reading and listening to music during her free time.